I think that is still too broad a statement.

I have paid subscriptions (the $20 a month variety) to ChatGPT, Claude, and Gemini, and I also use Grok and DeepSeek. I work with all of them.

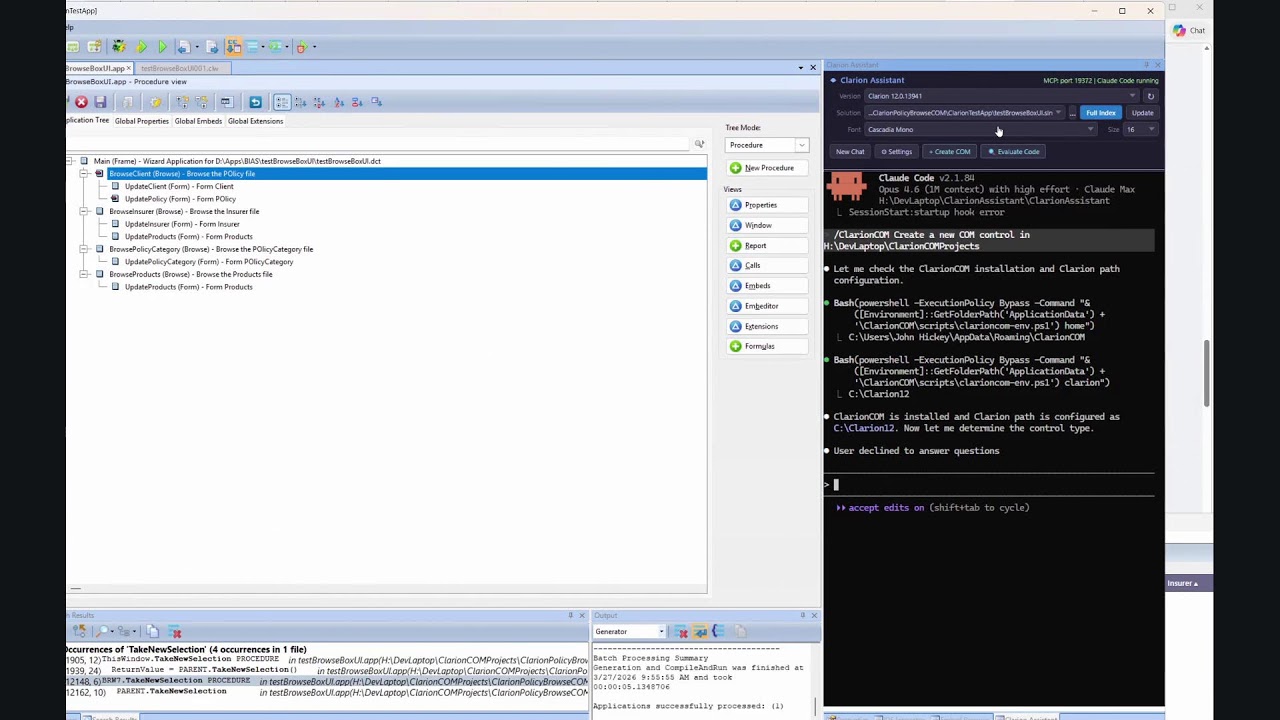

Over the past year, I have written literally tens of thousands of lines of excellent Clarion code with AI assistance.

So I will gladly agree with one narrow point: if someone opens a plain ChatGPT window, asks a simple Clarion question with no context, and expects great code back, they are probably not going to like the result.

But that is not the same thing as saying you need Claude with a $200 a month subscription, or that ChatGPT cannot do first-class Clarion work.

What matters is context, guardrails, and how you ask. If you give ChatGPT the existing code pattern, the surrounding embed or procedure context, the formatting rules, the business rules, the expected result, and the constraints it has to stay within, you absolutely can get first-class Clarion code back. I do it all the time.
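As a rough illustration, those ingredients could be assembled into one structured prompt along these lines. This is only a sketch in Python: the `PromptContext` class, the `build_prompt` helper, the section labels, and the sample strings are all hypothetical and not tied to any particular tool or model.

```python
from dataclasses import dataclass, field


@dataclass
class PromptContext:
    """Hypothetical container for the pieces of context listed above."""
    task: str
    existing_pattern: str = ""       # a similar routine pasted from the app
    surrounding_context: str = ""    # the embed or procedure the code lives in
    formatting_rules: list[str] = field(default_factory=list)
    business_rules: list[str] = field(default_factory=list)
    expected_result: str = ""
    constraints: list[str] = field(default_factory=list)


def _bullets(items: list[str]) -> str:
    """Render a list of rules as markdown-style bullets."""
    return "\n".join(f"- {item}" for item in items)


def build_prompt(ctx: PromptContext) -> str:
    """Join the non-empty sections into a single labeled prompt string."""
    sections = [
        ("Task", ctx.task),
        ("Existing code pattern to follow", ctx.existing_pattern),
        ("Surrounding embed or procedure", ctx.surrounding_context),
        ("Formatting rules", _bullets(ctx.formatting_rules)),
        ("Business rules", _bullets(ctx.business_rules)),
        ("Expected result", ctx.expected_result),
        ("Constraints", _bullets(ctx.constraints)),
    ]
    # Skip sections that were left empty so the prompt stays focused.
    parts = [f"## {title}\n{body}" for title, body in sections if body.strip()]
    return "\n\n".join(parts)


if __name__ == "__main__":
    # Placeholder values only; real use would paste actual code and rules.
    ctx = PromptContext(
        task="Add a validation check to the invoice update form.",
        existing_pattern="<paste a similar routine from the app here>",
        formatting_rules=["Match the indentation and naming of the pasted code"],
        constraints=[
            "Change only the embed code shown",
            "Do not invent templates or functions",
        ],
    )
    print(build_prompt(ctx))
```

The point of the structure is simply that nothing on the list gets forgotten; the same text could just as easily be typed by hand into a chat window.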

To me, that is the real distinction here:

It is not really “Claude versus ChatGPT.”

It is “thin prompting versus disciplined prompting.”

The better tool integrations can absolutely help. I am not arguing otherwise. More repo awareness, better file traversal, better IDE integration, and stronger workflow plumbing are all useful.

But those things are workflow advantages. They are not proof that ChatGPT is somehow incapable of doing good Clarion work.

So I would frame it this way:

- Yes, one-shot AI use in Clarion is often disappointing.

- Yes, niche languages like Clarion need more steering.

- No, you do not have to have Claude to get good results.

And no, I do not think it helps the conversation to imply that ChatGPT is somehow disqualified from serious Clarion work.

Used casually, it can disappoint.

Used properly, it can produce excellent work.

Those are two very different claims.